7 Best OpenAI Whisper Alternatives for Speech-to-Text (2026)

Compare the best OpenAI Whisper alternatives for developers — from managed APIs like Deepgram and AssemblyAI to optimized open-source tools. Pricing, accuracy, and features compared.

TL;DR: The best OpenAI Whisper alternative for developers is Deepgram Nova-3 ($0.0043/min pre-recorded, $0.0077/min streaming) for real-time streaming and production workloads, or AssemblyAI ($0.0025/min) for audio intelligence features like summarization and sentiment. For self-hosting, faster-whisper (free, open-source) runs Whisper up to 4x faster with lower memory use. For a consumer Mac app that shows what packaged Whisper looks like, Voibe ($7.50/mo, $59/yr, or $149 lifetime) runs Whisper on-device for dictation.

Disclosure: Voibe is our product. We compare all tools factually and acknowledge where competitors excel.

OpenAI's Whisper model changed speech-to-text when it launched in 2022 as a free, open-source model. The demand for speech-to-text solutions continues to grow: the global voice-to-text market is valued at $9.66 billion and is projected to grow at 15–20% CAGR through 2030, driven by enterprise adoption, accessibility requirements, and AI assistant integration. But building a production speech pipeline around Whisper means managing GPU infrastructure, handling scaling, fighting hallucinations, and accepting that Whisper has no native streaming support. This guide covers seven alternatives — from managed APIs to optimized open-source implementations — for developers who want to stop maintaining their own Whisper pipeline. For background on how Whisper works, see our technical Whisper explainer.

Key Takeaways: Whisper Alternatives at a Glance

| Tool | Best For | Price per Minute | Key Strength |
|---|---|---|---|
| Deepgram Nova-3 | Real-time streaming | $0.0043 (pre-recorded) | Fastest streaming STT, self-hosted option |
| AssemblyAI | Audio intelligence | $0.0025 (Universal-2) | Summarization, sentiment, entity detection |
| Google Cloud STT | Multilingual at scale | $0.016 (Chirp 3) | 100+ languages, GCP integration |
| Amazon Transcribe | AWS ecosystem | $0.024 (standard) | AWS integration, medical transcription |
| Azure Speech | Microsoft ecosystem | $0.016 (real-time) | Whisper models hosted, custom models |
| faster-whisper | Self-hosted Whisper | Free (+ GPU cost) | Up to 4x faster than stock Whisper, open-source |
| whisper.cpp | Edge/mobile deployment | Free (+ hardware) | C++ port, runs on CPU, mobile support |

Key Takeaway

Deepgram Nova-3 is the best managed API for real-time streaming ($0.0077/min streaming, $0.0043/min pre-recorded). AssemblyAI offers the cheapest per-minute base rate at $0.0025/min, with audio intelligence features available as add-ons. faster-whisper is the best self-hosted option for teams already using Whisper.

Why Developers Look for Whisper Alternatives

Whisper is a powerful open-source model, but building production speech-to-text around it comes with real challenges:

- No native real-time streaming. Whisper processes audio in batch — it transcribes complete audio files, not live streams. Building real-time transcription on top of Whisper requires chunking audio, managing buffers, and handling partial results. Managed APIs like Deepgram and AssemblyAI offer streaming natively.

- GPU infrastructure costs and complexity. Running Whisper's large-v3 model requires 10GB+ VRAM. Self-hosting on cloud GPUs costs $1.00–$1.60/hour per instance. Scaling, monitoring, and maintaining GPU infrastructure adds DevOps overhead that managed APIs eliminate.

- Hallucination problems. Whisper can generate text that was never spoken — especially on silent or low-quality audio segments. This is a well-documented issue that requires post-processing workarounds in production systems.

- No speaker diarization. Whisper does not identify different speakers. Multi-speaker transcription requires pairing Whisper with a separate diarization model (e.g., pyannote.audio), adding complexity.

- Unreliable language detection. Whisper's language detection can misidentify short audio segments, leading to incorrect transcription language selection. This is problematic for multilingual applications.

- OpenAI has moved beyond Whisper. In March 2025, OpenAI released gpt-4o-transcribe and gpt-4o-mini-transcribe with lower error rates than Whisper. OpenAI now recommends gpt-4o-mini-transcribe over Whisper for new API users.
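The chunking work described in the first bullet can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular library does it: the 30-second window, 5-second overlap, and 16 kHz sample rate are example values, and `chunk_bounds` is a hypothetical helper name.

```python
from typing import Iterator, Tuple

SAMPLE_RATE = 16_000  # assumed sample rate in Hz


def chunk_bounds(
    total_samples: int,
    window_s: float = 30.0,
    overlap_s: float = 5.0,
    sample_rate: int = SAMPLE_RATE,
) -> Iterator[Tuple[int, int]]:
    """Yield (start, end) sample indices for overlapping windows.

    The overlap gives each window some context from the previous one,
    so words cut at a boundary appear whole in at least one chunk.
    """
    window = int(window_s * sample_rate)
    step = window - int(overlap_s * sample_rate)
    if window <= 0 or step <= 0:
        raise ValueError("window must be positive and longer than the overlap")

    start = 0
    while start < total_samples:
        end = min(start + window, total_samples)
        yield start, end
        if end == total_samples:
            break  # the last window reached the end of the audio
        start += step


# Example: 70 seconds of audio at the assumed sample rate.
bounds = list(chunk_bounds(70 * SAMPLE_RATE))
```

For 70 seconds of audio this yields three windows covering 0–30 s, 25–55 s, and 50–70 s. A real pipeline still has to merge the duplicated text in the overlapping regions and decide when a partial result is stable enough to show, which is the part managed streaming APIs handle for you.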

What to Look For in a Whisper Alternative

1. Managed API vs Self-Hosted

Managed APIs (Deepgram, AssemblyAI, Google, AWS, Azure) handle infrastructure, scaling, and maintenance. Self-hosted options (faster-whisper, whisper.cpp) give you full control but require GPU management. Choose based on your team's DevOps capacity and cost sensitivity at scale.

2. Real-Time Streaming Support

If your application needs live transcription (voice assistants, live captions, call centers), you need native streaming support. Whisper does not offer this. Deepgram and AssemblyAI provide WebSocket-based streaming APIs.

3. Pricing Model

APIs charge per minute of audio. Self-hosting charges per GPU-hour. Calculate your breakeven: at what volume does self-hosting become cheaper? For most teams processing under 5,000 hours/month, managed APIs are cheaper when you include DevOps costs.
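A rough way to run that breakeven calculation is sketched below. The per-minute prices come from this guide; the fixed monthly overhead (DevOps time, monitoring, idle capacity) is a hypothetical figure you should replace with your own, and `breakeven_hours` is an illustrative helper, not part of any SDK.

```python
def breakeven_hours(
    api_price_per_min: float,
    selfhost_cost_per_min: float,
    fixed_monthly_overhead: float,
) -> float:
    """Monthly audio hours at which self-hosting matches the API bill.

    fixed_monthly_overhead is the cost you pay for self-hosting regardless
    of volume (an assumption you must estimate for your own team).
    """
    saving_per_min = api_price_per_min - selfhost_cost_per_min
    if saving_per_min <= 0:
        # Self-hosting never catches up if each minute costs more than the API.
        return float("inf")
    return fixed_monthly_overhead / saving_per_min / 60


# $0.016/min API vs $0.005/min self-hosted, with a hypothetical $3,000/month overhead.
vs_cloud = breakeven_hours(0.016, 0.005, 3000)

# $0.0043/min API vs the same $0.005/min self-hosted cost.
vs_deepgram = breakeven_hours(0.0043, 0.005, 3000)
```

With these inputs the first case breaks even at about 4,545 hours/month, while the second never does, because the self-hosted per-minute cost is already above the API price. The answer depends heavily on which API you compare against and on how honestly you count overhead.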

4. Audio Intelligence Features

Some APIs go beyond transcription: speaker diarization, sentiment analysis, entity detection, summarization, and topic detection. If you need these, AssemblyAI bundles them into the same API call. With Whisper, you need separate models for each.

5. Accuracy for Your Domain

General accuracy benchmarks do not always predict your specific use case. Test candidates against your actual audio (accent, noise level, domain vocabulary). Deepgram Nova-3 Medical is purpose-built for clinical audio. Google Chirp 3 excels at multilingual content.

6. Latency Requirements

For real-time applications, latency matters more than raw accuracy. Deepgram leads on streaming latency. Self-hosted faster-whisper can achieve low latency but requires optimization. Batch transcription is latency-insensitive.

1. Deepgram Nova-3 — Best Managed API for Real-Time Streaming

Deepgram Nova-3 is the leading speech-to-text API for production applications that need real-time streaming. Deepgram's proprietary model is purpose-built for low-latency streaming — the feature Whisper fundamentally lacks. Nova-3 also offers a self-hosted deployment option for on-premises requirements.

Key Features

- Real-time WebSocket streaming with low latency

- Speaker diarization (add-on)

- Nova-3 Medical model for clinical audio

- Self-hosted deployment option

- 30+ language support

- Topic detection and summarization

Pros

- Fastest streaming speech-to-text API available

- 28% cheaper than Whisper API for pre-recorded English ($0.0043 vs $0.006/min)

- Self-hosted option for on-premises requirements

- Nova-3 Medical model for healthcare applications

Cons

- Proprietary, closed model: the self-hosted option runs Deepgram's inference platform on your hardware, not an open model you can inspect or modify

- Speaker diarization is a paid add-on, not included in base price

- More expensive than AssemblyAI at most volume tiers

- Multilingual pricing higher ($0.0052/min pre-recorded)

Pricing

Pay-as-you-go: $0.0043/min (English pre-recorded), $0.0077/min (English streaming). Growth plan: $0.0036/min (pre-recorded), $0.0065/min (streaming). Free tier: $200 credit. 1,000 hours/month cost: approximately $258 (pre-recorded) or $462 (streaming).

User Reviews

Deepgram is listed on G2 with strong developer sentiment for streaming performance.

Best For

Applications requiring real-time streaming transcription: voice assistants, live captions, call center analytics, and real-time meeting notes.

2. AssemblyAI — Best for Audio Intelligence Features

AssemblyAI combines transcription with audio intelligence — summarization, sentiment analysis, entity detection, and topic detection — in a single API call. For developers who need more than raw transcription, AssemblyAI avoids the need to build a separate LLM pipeline for post-processing.

Key Features

- Universal-2 model with 99-language support

- Audio intelligence: summarization, sentiment, entity detection, topic detection

- Speaker diarization (add-on at $0.02/hr)

- Real-time streaming via WebSocket

- $50 free credits (roughly 333 hours at the $0.15/hr base rate, fewer with add-ons)

Pros

- Cheapest base rate among major APIs at $0.0025/min ($0.15/hr)

- Audio intelligence features built into the same API call

- 99-language support with Universal-2

- Generous free tier ($50 credits)

Cons

- Charges based on session duration, not audio length — real-world costs can be ~65% higher for short audio segments

- Add-on features (diarization, sentiment, summarization) increase total cost

- No self-hosted deployment option

- Streaming latency higher than Deepgram for real-time applications

Pricing

Universal-2: $0.0025/min ($0.15/hr). Add-ons: diarization +$0.02/hr, summarization +$0.03/hr, entity detection +$0.08/hr. Free tier: $50 credits.
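Because add-ons are billed per hour on top of the base rate, the effective price depends on which features you enable. The sketch below stacks the rates listed above; the dictionary keys and the `hourly_rate` helper are illustrative names, not AssemblyAI API parameters.

```python
from typing import Iterable

BASE_PER_HOUR = 0.15  # Universal-2 base rate quoted above

# Add-on rates quoted above, in dollars per audio hour.
ADDONS_PER_HOUR = {
    "diarization": 0.02,
    "summarization": 0.03,
    "entity_detection": 0.08,
}


def hourly_rate(addons: Iterable[str] = ()) -> float:
    """Return the effective dollars per audio hour with the given add-ons."""
    selected = set(addons)
    unknown = selected - ADDONS_PER_HOUR.keys()
    if unknown:
        raise ValueError(f"unknown add-ons: {sorted(unknown)}")
    total = BASE_PER_HOUR + sum(ADDONS_PER_HOUR[name] for name in selected)
    return round(total, 4)  # round away floating-point noise


base = hourly_rate()
full = hourly_rate(["diarization", "summarization", "entity_detection"])
```

With all three add-ons enabled the rate rises from $0.15/hr to $0.28/hr, so 1,000 hours/month costs about $280 rather than $150. Compare the all-in figure, not the base rate, when you benchmark against other providers.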

User Reviews

AssemblyAI is listed on G2 with positive reviews for developer experience and documentation.

Best For

Developers who need transcription plus audio intelligence (summarization, sentiment, entities) without building a separate processing pipeline.

3. Google Cloud Speech-to-Text (Chirp 3) — Best for Multilingual at Scale

Google Cloud Speech-to-Text with the Chirp 3 model offers the broadest language support (100+ languages) among commercial APIs. For teams already on Google Cloud Platform, STT integrates natively with other GCP services.

Key Features

- Chirp 3 model with 100+ language support (GA in 2025)

- Real-time streaming and batch transcription

- Speaker diarization

- Dynamic batch option (75% cheaper, up to 24hr delivery)

- Deep GCP integration (BigQuery, Cloud Storage, Pub/Sub)

Pros

- Broadest multilingual coverage among APIs

- Dynamic batch pricing is extremely cost-effective ($0.004/min)

- Native GCP ecosystem integration

- Free tier: 60 minutes/month

Cons

- Standard pricing is expensive at $0.016/min (about 3.7x Deepgram's pre-recorded rate and 6.4x AssemblyAI's base rate)

- GCP billing complexity for non-Google shops

- Chirp 3 available only through V2 API

Pricing

Standard: $0.016/min. Dynamic batch: $0.004/min (up to 24hr delivery). Volume discounts available. Free tier: 60 min/month.

Best For

Teams on GCP needing multilingual transcription across 100+ languages, or batch workloads where 24-hour delivery is acceptable.

4. Amazon Transcribe — Best for AWS Ecosystem

Amazon Transcribe is AWS's managed speech-to-text service. Amazon Transcribe Medical is purpose-built for healthcare applications with HIPAA eligibility. For teams on AWS, Transcribe integrates natively with S3, Lambda, and other AWS services.

Key Features

- Amazon Transcribe Medical for HIPAA-eligible clinical transcription

- Real-time streaming and batch modes

- Custom vocabulary and custom language models

- Speaker diarization

- Deep AWS integration

Pros

- HIPAA-eligible medical transcription model

- Custom vocabulary support — closest to building domain-specific models

- Native AWS ecosystem integration

- Free tier: 60 minutes/month for 12 months

Cons

- Most expensive major API at $0.024/min (standard)

- AWS billing complexity

- Fewer audio intelligence features than AssemblyAI

Pricing

Standard: $0.024/min. Medical: $0.0375/min. Free tier: 60 min/month for 12 months.

Best For

Teams on AWS needing deep service integration, or healthcare applications requiring HIPAA-eligible transcription.

5. Azure Speech Services — Best for Microsoft Ecosystem

Azure Speech Services hosts Whisper models alongside Microsoft's own speech models, giving you a choice. For teams already on Azure, Speech Services integrates with the broader Azure AI ecosystem. Azure is the only major cloud that lets you run Whisper as a managed service without self-hosting.

Key Features

- Hosted Whisper models (no self-hosting required)

- Microsoft's proprietary speech models as an alternative

- Custom Speech for building domain-specific models

- Real-time streaming

- Azure ecosystem integration

Pros

- Run Whisper without managing GPU infrastructure

- Custom Speech allows domain-specific fine-tuning

- Can use either Whisper or Microsoft's own models

- Free tier: 5 hours/month (real-time), 1 hour/month (batch)

Cons

- Pricing at $0.016/min is above Deepgram and AssemblyAI

- Azure billing and service management complexity

- Fewer audio intelligence features than AssemblyAI

Pricing

Real-time: $0.016/min. Batch: $0.0108/min. Free tier: 5 hours/month (real-time).

Best For

Teams on Azure who want managed Whisper without self-hosting, or those needing custom speech model training.

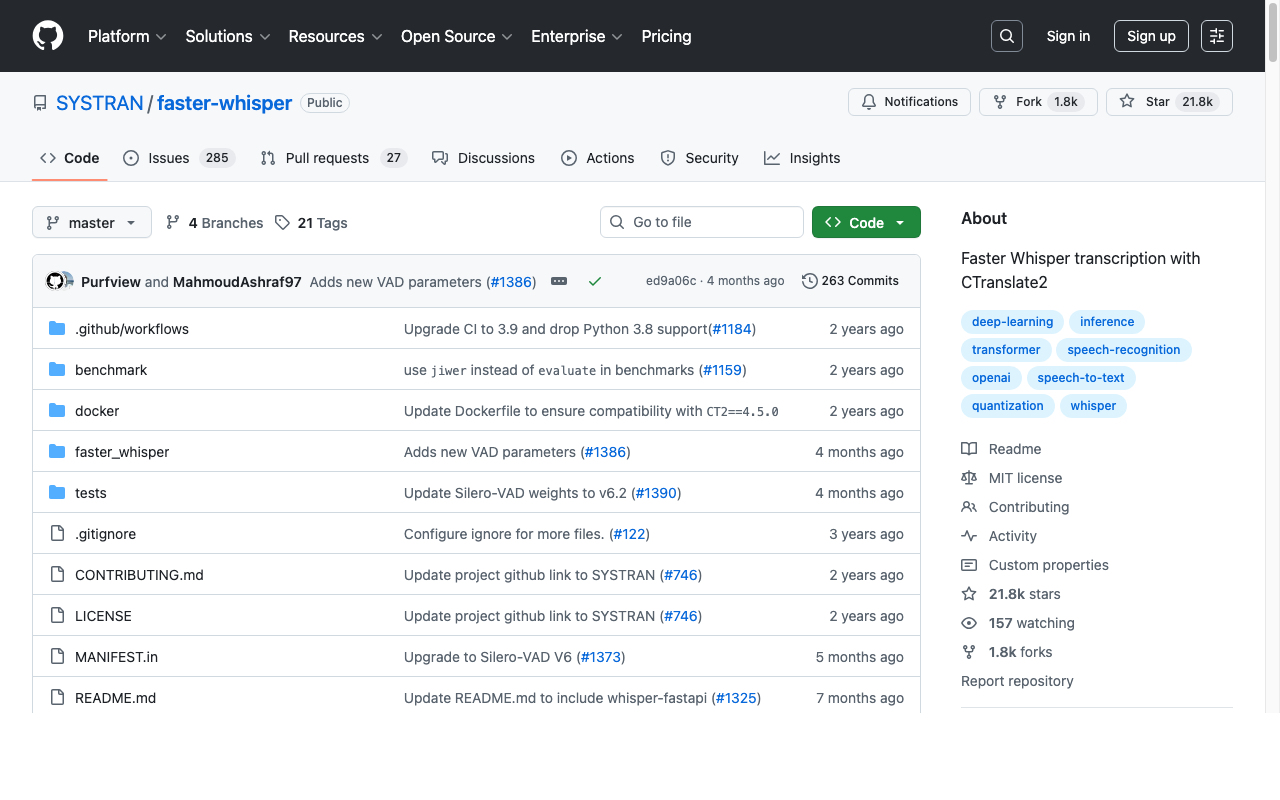

6. faster-whisper — Best Open-Source Self-Hosted Option

faster-whisper replaces Whisper's PyTorch runtime with CTranslate2, a C++ inference engine that runs Whisper models up to 4x faster with the same accuracy and lower memory usage. For teams committed to self-hosting, faster-whisper is the best way to reduce GPU costs without changing models.

Key Features

- Up to 4x faster inference than stock OpenAI Whisper

- 8-bit quantization support (CPU and GPU) for further speedups

- Same Whisper model weights — identical accuracy

- Python API (close to openai-whisper, though not call-for-call identical)

- 14,000+ GitHub stars, active community
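A minimal sketch of the Python API with 8-bit quantization enabled is shown below. It assumes the `faster-whisper` package is installed and a CUDA GPU is available; the `Line` dataclass and both helper functions are illustrative wrappers written for this example, not part of the library.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Line:
    start: float  # seconds
    end: float    # seconds
    text: str


def transcribe(
    path: str,
    model_size: str = "large-v3",
    device: str = "cuda",
    compute_type: str = "int8_float16",  # 8-bit weights on GPU
) -> List[Line]:
    """Transcribe one audio file and return timestamped lines."""
    # Imported lazily so format_lines() works without the package installed.
    from faster_whisper import WhisperModel

    model = WhisperModel(model_size, device=device, compute_type=compute_type)
    # transcribe() returns a lazy generator; iterating it runs the decoding.
    # vad_filter=True skips non-speech audio before decoding.
    segments, _info = model.transcribe(path, beam_size=5, vad_filter=True)
    return [Line(s.start, s.end, s.text.strip()) for s in segments]


def format_lines(lines: List[Line]) -> List[str]:
    """Render lines as '[start -> end] text' strings."""
    return [f"[{l.start:.2f}s -> {l.end:.2f}s] {l.text}" for l in lines]
```

Calling `format_lines(transcribe("meeting.wav"))` (the file name is a placeholder) would produce one string per segment. On a machine without a GPU, `device="cpu"` with `compute_type="int8"` is the usual substitute. Enabling the voice activity filter is one common way to reduce hallucinated text on silent stretches, though it does not eliminate the problem.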

Pros

- Up to 4x faster inference, which means up to 4x lower GPU cost per minute of audio

- Same accuracy as stock Whisper — just faster

- 8-bit quantization reduces memory requirements

- Open-source and free

Cons

- Still requires GPU infrastructure management

- No streaming support built-in (same Whisper limitation)

- No speaker diarization, summarization, or audio intelligence

- You own the scaling, monitoring, and maintenance burden

Pricing

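Free (open-source). GPU costs: approximately $0.005–$0.013 per minute of audio, depending on instance type and utilization. A monthly bill of $30–$80 for a single GPU instance corresponds to roughly 30–50 on-demand GPU-hours at $1.00–$1.60/hour; an always-on instance at those rates costs roughly $730–$1,170/month.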

Best For

Teams already self-hosting Whisper who want to cut GPU costs by up to 4x without changing their model or pipeline architecture.

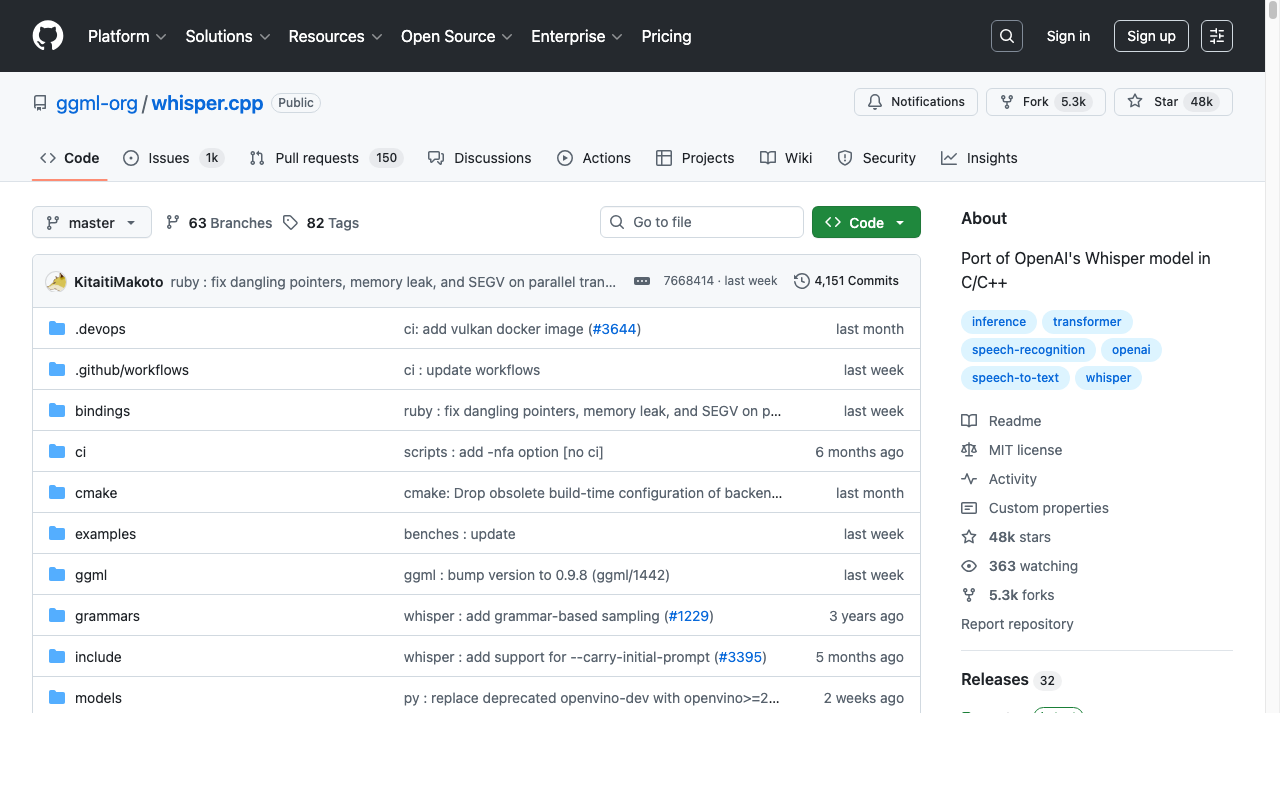

7. whisper.cpp — Best for Edge and Mobile Deployment

whisper.cpp is a C/C++ port of Whisper by Georgi Gerganov that runs efficiently on CPU, Apple Neural Engine, and mobile devices. whisper.cpp is the foundation for many consumer Whisper apps — including Mac dictation tools like Voibe that run Whisper entirely on-device. With 38,000+ GitHub stars, whisper.cpp is the most popular Whisper implementation for edge deployment.

Key Features

- C/C++ implementation — runs on CPU without GPU

- Apple Silicon optimization (ARM NEON, Metal, Core ML)

- iOS, Android, and WebAssembly support

- Quantized models for reduced size (4-bit, 5-bit)

- 38,000+ GitHub stars

Pros

- Runs on CPU — no GPU required for deployment

- Optimized for Apple Silicon (M1–M4) via Core ML and Metal

- Mobile deployment on iOS and Android

- Smallest memory footprint among Whisper implementations

Cons

- Slower than faster-whisper for GPU-based server workloads

- No streaming API built-in

- C/C++ codebase — harder to integrate than Python-based faster-whisper

- Accuracy slightly lower with heavily quantized models

Pricing

Free (open-source, MIT license). Hardware costs: runs on any CPU, optimized for Apple Silicon. Zero cloud dependency.

Best For

Edge deployment on mobile, desktop, and embedded devices where GPU access is unavailable, and for building consumer apps that run Whisper on-device.

End-user alternative: If you want a desktop dictation app that already wraps Whisper with a push-to-talk GUI, see our Handy review. Handy (MIT, ~20,000 GitHub stars) runs Whisper locally on Mac, Windows, and Linux with zero setup. Our Handy alternatives guide covers 9 options for users who want AI editing, IDE integration, or mobile support.

How to Choose the Right Whisper Alternative

Use these decision questions to select the best OpenAI Whisper alternative for your application:

Do you need real-time streaming transcription?

- Yes → Deepgram Nova-3 (fastest streaming) or AssemblyAI (with audio intelligence).

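- No (batch is fine) → Any option works. AssemblyAI has the lowest listed base rate at $0.0025/min; among the big-cloud providers, Google Dynamic Batch at $0.004/min is cheapest if delivery within 24 hours is acceptable.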

Managed API or self-hosted?

- Managed API → Deepgram, AssemblyAI, or your cloud provider (Google, AWS, Azure).

- Self-hosted → faster-whisper (GPU servers) or whisper.cpp (CPU/edge).

Do you need audio intelligence (summarization, sentiment, entities)?

- Yes → AssemblyAI has these built into the API.

- No → Deepgram or self-hosted options are more cost-effective.

What is your monthly audio volume?

- Under 1,000 hours → Managed APIs are most cost-effective when factoring in DevOps time.

- 1,000–10,000 hours → Compare API pricing vs self-hosted costs carefully.

- Over 10,000 hours → Self-hosting faster-whisper is likely cheaper.

Are you deploying to mobile or edge devices?

- Yes → whisper.cpp is the only option on this list designed for on-device deployment.

- No → faster-whisper (self-hosted) or managed APIs.
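The decision questions above can be folded into a small helper, sketched below. The order in which the questions are checked is an assumption made for this example (on-device first, then audio intelligence, then streaming, then hosting and volume); adjust the priorities to match your own constraints.

```python
def recommend(
    streaming: bool,
    self_hosted: bool,
    audio_intelligence: bool,
    monthly_hours: float,
    on_device: bool,
) -> str:
    """Map the decision questions in this guide to a starting recommendation."""
    if on_device:
        return "whisper.cpp"
    if audio_intelligence:
        return "AssemblyAI"
    if streaming:
        return "Deepgram Nova-3"
    # Over 10,000 hours/month, self-hosting is likely cheaper per this guide.
    if self_hosted or monthly_hours > 10_000:
        return "faster-whisper"
    return "Managed API (Deepgram, AssemblyAI, or your cloud provider)"


live_captions = recommend(
    streaming=True, self_hosted=False, audio_intelligence=False,
    monthly_hours=500, on_device=False,
)
archive_backlog = recommend(
    streaming=False, self_hosted=False, audio_intelligence=False,
    monthly_hours=20_000, on_device=False,
)
```

Treat the result as a shortlist entry rather than a verdict. Volumes between 1,000 and 10,000 hours/month still need the explicit price comparison described above.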

Best Tool for Your Situation: Use-Case Cheat Sheet

- Building a voice assistant with live transcription → Deepgram Nova-3 streaming ($0.0077/min) — fastest real-time STT

- Transcribing podcast episodes or recorded meetings → AssemblyAI ($0.0025/min) — cheapest batch rate with summarization

- Processing 100+ languages → Google Chirp 3 — 100+ language support, best multilingual accuracy

- Medical/clinical transcription → Deepgram Nova-3 Medical or Amazon Transcribe Medical

- Already on AWS → Amazon Transcribe — native S3/Lambda integration

- Already on Azure → Azure Speech Services — managed Whisper or Microsoft models

- Already on GCP → Google Cloud STT — native BigQuery/Pub-Sub integration

- Self-hosting Whisper and want to cut GPU costs → faster-whisper — 4x speedup, same accuracy

- Deploying to iOS/Android/edge devices → whisper.cpp — CPU-optimized, mobile-ready

- Building a Mac/desktop app with on-device STT → whisper.cpp — powers apps like Voibe

- Need summarization + sentiment + transcription in one call → AssemblyAI — audio intelligence built-in

- Processing 10,000+ hours/month at lowest cost → faster-whisper self-hosted or Google Dynamic Batch ($0.004/min)

Frequently Asked Questions

Common developer questions about Whisper alternatives, organized by topic.

Whisper Basics

Is OpenAI Whisper free?

The Whisper model is open-source and free to download. Running the larger models at production speed generally requires GPU hardware; cloud GPUs cost $1.00–$1.60/hour. The OpenAI Whisper API costs $0.006/min as a managed service.

What replaced OpenAI Whisper API?

In March 2025, OpenAI released gpt-4o-transcribe and gpt-4o-mini-transcribe with lower error rates. OpenAI recommends gpt-4o-mini-transcribe for most transcription tasks. The original Whisper API remains available.

Comparisons

How does Deepgram compare to Whisper?

Deepgram Nova-3 offers real-time streaming (Whisper does not), 28% lower pricing for pre-recorded English ($0.0043 vs $0.006/min), and speaker diarization. Whisper is open-source and free to self-host.

Is faster-whisper better than Whisper?

faster-whisper runs Whisper models up to 4x faster with the same accuracy and lower memory usage via CTranslate2. For self-hosting it is generally the better choice: same model weights, faster inference.

Costs and Self-Hosting

How much does it cost to self-host Whisper?

Cloud GPU instances (e.g., AWS g5.xlarge) cost $1.00–$1.60/hour. At typical utilization, this translates to $0.02–$0.05/min of transcribed audio. Self-hosting becomes cost-effective at approximately 5,000–10,000 hours/month.
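The conversion from an hourly GPU price to a per-minute audio cost depends on two things: how fast the model transcribes relative to real time, and how much of the paid time the GPU is actually busy. The sketch below makes that explicit. The speed factors and utilization values are hypothetical inputs chosen to land in the ranges quoted in this guide, not measured benchmarks.

```python
def cost_per_audio_minute(
    gpu_hourly_rate: float,
    speed_factor: float,
    utilization: float,
) -> float:
    """Dollars per minute of transcribed audio.

    speed_factor: audio minutes transcribed per GPU minute while busy.
    utilization: fraction of paid GPU time spent transcribing (0 to 1].
    """
    if speed_factor <= 0:
        raise ValueError("speed_factor must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    audio_minutes_per_hour = 60 * speed_factor * utilization
    return gpu_hourly_rate / audio_minutes_per_hour


# Hypothetical stock-Whisper setup: 2x real time, GPU busy 40% of the time.
stock = cost_per_audio_minute(1.00, speed_factor=2.0, utilization=0.4)

# Same instance with a 4x faster runtime (8x real time).
faster = cost_per_audio_minute(1.00, speed_factor=8.0, utilization=0.4)
```

Under these assumptions the stock setup comes to about $0.021/min and the 4x faster runtime to about $0.005/min. Utilization matters as much as raw speed: halving it doubles the per-minute cost, which is why low-volume teams rarely come out ahead by self-hosting.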

Which API has the best accuracy?

Accuracy varies by audio type. Deepgram Nova-3 leads on general English benchmarks. AssemblyAI Universal-2 performs well on noisy multi-speaker audio. Google Chirp 3 excels at multilingual content. Test against your specific audio.

Features

Which APIs support real-time streaming?

Deepgram, AssemblyAI, Google Cloud STT, Amazon Transcribe, and Azure Speech all support real-time streaming. Whisper (including faster-whisper and whisper.cpp) does not support native streaming.

The Bottom Line: Stop Maintaining Your Whisper Pipeline

Whisper was a breakthrough open-source model, but building production speech-to-text around it means solving problems that managed APIs have already solved: streaming, scaling, diarization, and infrastructure management.

For real-time streaming, Deepgram Nova-3 ($0.0077/min streaming, $0.0043/min pre-recorded) is the fastest API with the best streaming performance. For audio intelligence, AssemblyAI ($0.0025/min) offers the cheapest base rate, with summarization and sentiment available in the same API call. For self-hosting, faster-whisper cuts GPU costs by up to 4x with the same accuracy. For edge deployment, whisper.cpp runs on CPU and mobile devices.

For a consumer example of what packaged Whisper looks like, Voibe ($7.50/mo, $59/yr, or $149 lifetime) runs Whisper entirely on-device for Mac dictation — proving that Whisper's accuracy is production-ready when wrapped in the right UX.

For more technical background, read our how Whisper works guide, cloud vs local dictation comparison, and privacy guide. For consumer context on how Whisper stacks up against built-in Mac dictation, see our Apple Dictation vs OpenAI Whisper comparison. For the cloud-product side of the same supply chain — what a polished commercial dictation app built on top of speech recognition looks like — see OpenAI Whisper vs Wispr Flow (free open-source model vs $144/yr cloud product, with the full Mac Whisper-wrapper landscape: Voibe / Superwhisper / VoiceInk / Wisprtype / MacWhisper). Developers who want to use voice to prompt AI coding tools (Cursor, Claude Code, ChatGPT) should see our guide to voice-prompting ChatGPT, Claude, and Cursor — the Five-Part Voice Prompt framework (Goal / Inputs / Constraints / Example / Output) with worked examples including file-reference prompts for Cursor. For the broader workflow context, see the voice input workflow guide.
